Generative Art

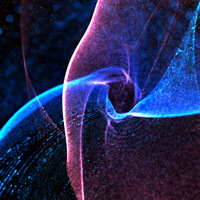

Algorithms that make decisions based on simple mathematical rules such as attraction, noise, repulsion, etc. interact to create unique emergent complexity in each session.

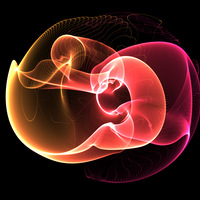

Lumicles is a real-time, GPU-based 3D particle simulation developed by Federico Marino that runs directly in the web browser. The project, which began development in 2016, lets users explore clouds of millions of colored particles that react to dynamic force fields defined by algorithms and mathematical functions written in GLSL. It offers an immersive audiovisual experience that combines 3D audio and visualization, both in desktop mode and on Virtual Reality systems (via WebXR).
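As a rough illustration of how simple rules such as attraction, repulsion, and noise can combine into a force field, here is a minimal CPU-side sketch in Python. It is not the project's GLSL code: the function names (`force_at`, `noise_force`, `step`), the repulsion radius, the damping factor, and the sine-based stand-in for noise are all assumptions chosen for readability.

```python
import math

def force_at(pos, attractor, repel_radius=0.5, strength=1.0):
    """Unit-strength force: pull toward `attractor`, push away inside `repel_radius`."""
    delta = [a - p for a, p in zip(attractor, pos)]
    dist = math.sqrt(sum(d * d for d in delta))
    if dist == 0.0:
        return (0.0, 0.0, 0.0)  # no defined direction at the attractor itself
    direction = [d / dist for d in delta]
    # Attraction outside the radius, repulsion inside it (hypothetical rule).
    sign = 1.0 if dist > repel_radius else -1.0
    return tuple(sign * strength * d for d in direction)

def noise_force(pos, t, amplitude=0.1):
    """Cheap deterministic stand-in for a noise field (not Perlin or simplex noise)."""
    x, y, z = pos
    return (
        amplitude * math.sin(3.1 * y + t),
        amplitude * math.sin(2.7 * z + 1.3 * t),
        amplitude * math.sin(3.7 * x + 0.7 * t),
    )

def step(pos, vel, attractor, t, dt=1.0 / 60.0, damping=0.98):
    """Advance one particle by one frame: update velocity first, then position."""
    f = force_at(pos, attractor)
    n = noise_force(pos, t)
    new_vel = tuple(damping * (v + dt * (fi + ni)) for v, fi, ni in zip(vel, f, n))
    new_pos = tuple(p + dt * v for p, v in zip(pos, new_vel))
    return new_pos, new_vel

# One frame for a particle that starts two units away from the attractor.
pos, vel = step((2.0, 0.0, 0.0), (0.0, 0.0, 0.0), (0.0, 0.0, 0.0), t=0.0)
```

In a GPU implementation the same per-particle update would typically run in parallel in a shader, once per particle per frame.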

Up to 4 million particles are computed at 60 fps using WebGL and GLSL shaders. A 3-pass rendering pipeline applies post-processing filters.
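To put these figures in perspective, the short Python sketch below works out the per-frame time budget and the total update rate. It also computes the size of a square state texture with one texel per particle, a common GPU particle technique that the source does not confirm for Lumicles; the helper names are hypothetical.

```python
import math

def state_texture_side(particle_count):
    """Smallest power-of-two side of a square texture holding one texel per particle."""
    side = math.isqrt(particle_count - 1) + 1  # integer ceiling of the square root
    return 1 << (side - 1).bit_length()        # round up to a power of two

def frame_budget_ms(fps):
    """Time available to simulate and render one frame, in milliseconds."""
    return 1000.0 / fps

def updates_per_second(particle_count, fps):
    """Total particle updates per second at the given frame rate."""
    return particle_count * fps

side = state_texture_side(4_000_000)        # 2048 (2048 * 2048 = 4,194,304 texels)
budget = frame_budget_ms(60)                # about 16.7 ms per frame
rate = updates_per_second(4_000_000, 60)    # 240,000,000 updates per second
```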

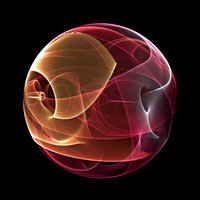

WebXR makes the simulation accessible from any VR headset without installing software. The user navigates the particle cloud with controllers and adjusts parameters from an in-VR menu.

Each sound source is a physical object with an exact position in 3D space. The Web Audio API generates a dynamic soundtrack that varies with the simulation and user movement.
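One way positional audio responds to user movement is distance attenuation. The Python sketch below reproduces the "inverse" distance model defined for the Web Audio API's `PannerNode`, with the specification's default reference distance and rolloff factor of 1. Whether Lumicles uses this particular model is an assumption; the function name and the sample positions are illustrative only.

```python
import math

def inverse_distance_gain(source, listener, ref_distance=1.0, rolloff=1.0):
    """Gain of a positioned source under the Web Audio 'inverse' distance model."""
    distance = math.dist(source, listener)
    # Within the reference distance the source plays at full volume.
    distance = max(distance, ref_distance)
    return ref_distance / (ref_distance + rolloff * (distance - ref_distance))

# A source at the origin, heard by a listener moving away along the x axis.
near = inverse_distance_gain((0.0, 0.0, 0.0), (1.0, 0.0, 0.0))  # 1.0
far = inverse_distance_gain((0.0, 0.0, 0.0), (2.0, 0.0, 0.0))   # 0.5
```

In the browser, the equivalent calculation is performed by the audio engine itself once the source and listener positions are set each frame.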

In 2019, the Lumicles project was exhibited at the Centro Cultural Kirchner in Buenos Aires, as part of the National Art and Technology 2018 competition. The installation allowed visitors to experience the simulations in Virtual Reality using an Oculus Rift headset.

In 2025, as part of The Night of the Museums 2025, the project was exhibited at the Faculty of Engineering of the University of Buenos Aires (Las Heras campus).
